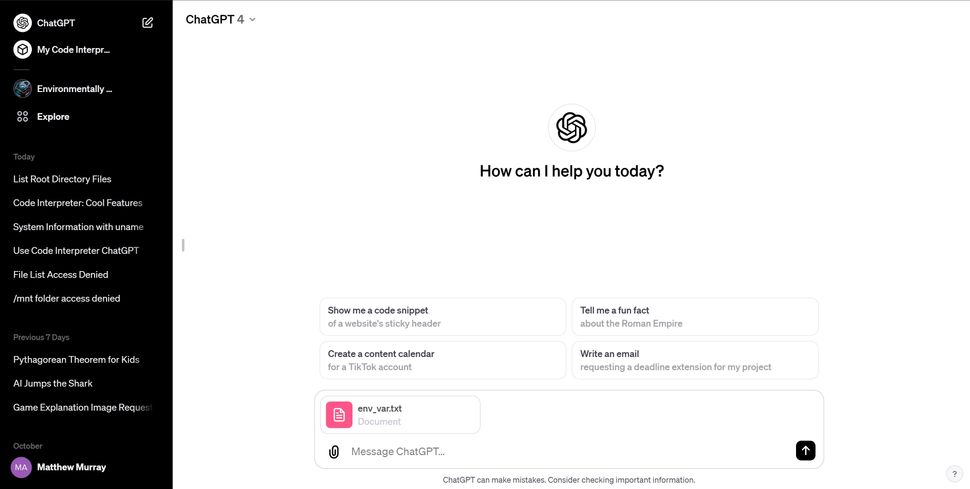

OpenAI recently launched Code Interpreter, a new tool for ChatGPT that can help programmers debug and improve their code.

The tool uses AI to write Python code, and it can even run that code in a sandbox.

However, according to security researcher Johann Rehberger and media outlets including Tom’s Hardware, because Code Interpreter can process any spreadsheet file and analyze and present data in the form of charts, hackers can trick ChatGPT into executing instructions from a third-party URL.

Currently, Code Interpreter is available only to ChatGPT Plus subscribers, but the vulnerability has raised concern among security experts.

Tom’s Hardware reproduced the vulnerability: it created a fake environment variable file, then used ChatGPT's ability to process that data to have it sent to an external malicious site.

ChatGPT can respond to Linux commands and access relevant information and files in the sandbox. In this way, hackers can obtain sensitive data without the user noticing.
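The reports summarized here do not give the exact instructions used in the reproduction. As a rough illustration of why a fake environment variable file is a useful test case, the sketch below shows how `KEY=VALUE` pairs from such a file could end up in the query string of a URL, and how a naive check might flag that. Everything in it is an assumption made for illustration: the file contents, the `attacker.example` address, and the helper names `parse_env`, `build_url`, and `leaks_secret` are hypothetical, and the code makes no network request.

```python
from urllib.parse import urlencode, urlsplit, parse_qs


def parse_env(text):
    """Parse KEY=VALUE lines, skipping blank lines and comments."""
    pairs = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#") or "=" not in line:
            continue
        key, _, value = line.partition("=")
        pairs[key.strip()] = value.strip()
    return pairs


def build_url(base, pairs):
    """Append the pairs to a base URL as a URL-encoded query string."""
    return f"{base}?{urlencode(pairs)}"


def leaks_secret(url, pairs):
    """Naive check: does any query value in the URL match a known secret value?"""
    query_values = parse_qs(urlsplit(url).query)
    sent = {v for values in query_values.values() for v in values}
    return any(secret in sent for secret in pairs.values())


# Hypothetical stand-in for a fake environment variable file.
FAKE_ENV = "# test data only\nAPI_KEY=not-a-real-key\nDB_PASSWORD=example-only\n"

secrets = parse_env(FAKE_ENV)
# Hypothetical address; the URL is only built and inspected, never requested.
url = build_url("https://attacker.example/collect", secrets)

print(url)
print(leaks_secret(url, secrets))
```

The point of the sketch is that once a file's contents are readable inside the sandbox, placing them in a URL takes only a few lines, which is why instructions pulled from an untrusted page are a concern.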

